Why Your AI Agents Write Bad Code (And What We Did About It)

Six months ago you approved the Copilot or Cursor licenses. Your team was excited. Adoption looked strong. And then the complaints started showing up in your 1:1s.

Review cycles got longer. Senior engineers, the ones you were hoping to free up for high-leverage work, said the AI was slowing them down. PRs full of generated code were coming in with architecture violations: wrong service boundaries, deprecated internal libraries, dependency patterns that violated your security policy. Your board asked about ROI on the AI investment and you didn't have a clean answer. And when you looked across teams, every one of them was using AI in a different way, with no consistency and no shared standards.

You made the right call buying the tools. The gap is structural, not technological.

The Problem Is Not the Model

Here is the uncomfortable truth: the AI agent is not malfunctioning. It is doing exactly what it was built to do, generating plausible code based on what it can see. The problem is what it cannot see.

It cannot see your architectural decision records. It does not know that you deprecated the internal HTTP client library eight months ago. It has no idea that services in the payments domain are not allowed to call services in the identity domain directly. It does not know what "good code" means in your specific organization, because nobody wrote that down in a form it can use.

The insight that changed our approach at FairMind was simple: an agent's mistake is not a failure of the model. It is a signal that your environment is missing a constraint. The work is to encode that constraint so the agent cannot make the same mistake twice.

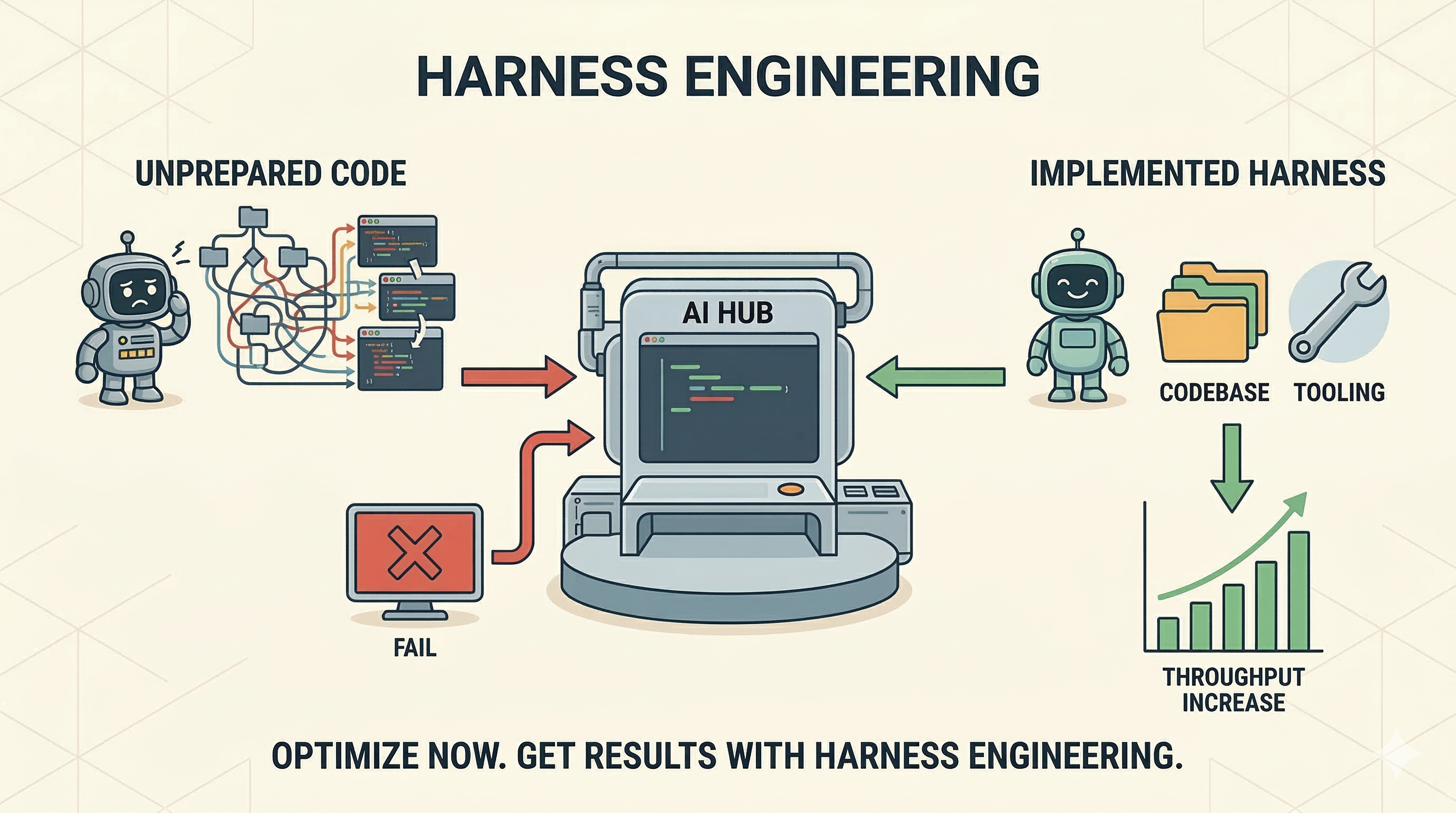

Counterintuitively, you get more capability from your agents by tightening their environment, not by relaxing it. This principle, confirmed independently by practitioners at Stripe, OpenAI, Anthropic, and LangChain, is the foundation of what the industry now calls harness engineering.

What We Actually Did

We did not arrive at our current workflow by reading a playbook. We built it through a year of failures, debates, and incremental fixes while building FairMind's own platform with AI agents at the center of the development process.

The Review Bottleneck

The first crisis was code review. About a year ago, when agent tooling was less mature, we hit a wall: agents were producing code faster than our senior engineers could review it. Instead of the productivity gain we expected, we had created a new bottleneck. The most experienced people on the team were spending their time reading AI-generated code instead of designing systems.

We debated this intensely. The initial impulse was to tighten review standards. Instead, we went in the opposite direction: we built agent-assisted review. We created tools that let agents perform the first pass of code review, combined with targeted automated test suites designed specifically for the patterns agents tend to get wrong. This started as an improvised, somewhat artisanal solution. It worked well enough that it became a core feature of the FairMind platform that we now offer to clients.

The Context Problem

The second problem was context fragmentation. Agents had no structured way to understand our codebase, our conventions, or our architectural decisions. They were generating plausible code in a vacuum.

We built what we call the Project Context: a structured repository that contains the full codebase (auto-updated), architectural documentation, decision records, and, critically, all artifacts that agents themselves produce. When an agent does a brainstorming session, writes documentation, or performs an impact analysis, that output is automatically saved back into the Project Context. The knowledge base grows with every interaction.
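As an illustration only, the idea of a context store that agents both read from and write back to can be sketched in a few lines of Python. This is not FairMind's implementation: `Artifact`, `ProjectContext`, the artifact kinds, and the sample entries are hypothetical names chosen for the example, and a real system would persist to disk or a database rather than to a list in memory.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Artifact:
    kind: str          # hypothetical kinds: "adr", "brainstorm", "impact_analysis"
    title: str
    body: str
    produced_by: str   # "human" or an agent identifier
    created_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


class ProjectContext:
    """In-memory stand-in for a shared context store (illustrative only)."""

    def __init__(self) -> None:
        self._artifacts: list[Artifact] = []

    def save(self, artifact: Artifact) -> None:
        # Agent output is written back, so the knowledge base grows over time.
        self._artifacts.append(artifact)

    def find(self, kind: str) -> list[Artifact]:
        return [a for a in self._artifacts if a.kind == kind]

    def briefing(self, kinds: list[str]) -> str:
        """Join the selected artifact kinds into text an agent can read in-session."""
        lines = []
        for kind in kinds:
            for a in self.find(kind):
                lines.append(f"[{a.kind}] {a.title}: {a.body}")
        return "\n".join(lines)


# Sample entries are invented for the example.
context = ProjectContext()
context.save(Artifact("adr", "Deprecate internal HTTP client",
                      "Do not use it in new code.", "human"))
context.save(Artifact("impact_analysis", "Checkout refactor",
                      "Output of an agent session, saved back.", "agent-1"))
```

The point of the sketch is the write-back path: human-authored decisions and agent-produced artifacts live in the same store, and the next session starts from both.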

Our workflow now starts well before any code is written. I use FairMind's agents to create epics and user stories, brainstorming directly against the codebase and running web research to inform decisions. These stories go to the CTO and development team, who collaborate, ask clarifying questions (to me or to agents), and decompose the work into tasks, all within FairMind. By the time an agent starts writing code, the context is comprehensive and the ambiguities have been resolved.

Development Journals and CI/CD Gap Analysis

The third innovation was born from a specific pain point: when agents finished a development task, we had no reliable way to verify whether the output matched what was planned, short of reading every line ourselves.

Our solution was to force every agent to produce a "development journal" during execution: a structured log of activities performed, decisions made, files modified, and rationale for each choice. When code is pushed, a dedicated agent in our CI/CD pipeline reads these journals, compares them with what was planned in FairMind, and produces a gap analysis. The output is a comparison table: what aligns, what deviates, what needs remediation, and proposed lines of intervention. The developer reads the PR, sees exactly where the gaps are, and can complete what needs completing.
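The shape of that comparison can be sketched as follows. In our pipeline an agent reads the journals and writes the analysis; the sketch below swaps that for a plain deterministic comparison of task identifiers, which is a simplification. `JournalEntry`, `gap_analysis`, and the task ids are hypothetical names used only for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JournalEntry:
    task_id: str                      # the planned task this work claims to address
    files_modified: tuple[str, ...]
    rationale: str


def gap_analysis(planned: list[str], journal: list[JournalEntry]) -> list[tuple[str, str]]:
    """Return (task_id, status) rows: aligned, missing, or unplanned."""
    done = {entry.task_id for entry in journal}
    rows = [
        (task_id, "aligned" if task_id in done else "missing")
        for task_id in planned
    ]
    # Work that appears in the journal but was never planned is a deviation.
    rows += [(task_id, "unplanned") for task_id in sorted(done - set(planned))]
    return rows


# Invented sample data: two planned tasks, one completed, one extra change.
journal = [
    JournalEntry("T-1", ("billing/api.py",), "Implemented as specified."),
    JournalEntry("T-9", ("billing/cache.py",), "Added caching; not in the plan."),
]
for task_id, status in gap_analysis(["T-1", "T-2"], journal):
    print(f"{task_id:<6}{status}")
```

Even this crude version surfaces the two cases a reviewer cares about most: planned work that never happened and work that happened without being planned.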

This mechanism transformed our quality assurance from a manual, time-intensive review into a structured, auditable process.

The Observability Layer

Agents sometimes develop incorrect assumptions that silently propagate through their work. We discovered this when an agent, during a codebase documentation task, decided that one of our libraries was related to video game development. It was not. The root cause: the agent had failed to access the actual codebase, and instead of stopping to report the problem, it inferred the project's purpose from the repository name and built an entire analysis on a false premise. The failure was not in the model. It was in the environment: nothing forced the agent to stop when it lacked access to critical information.

We responded with a guardrail that interrupts agents when they cannot access the codebase and forces them to ask for guidance. We also built an observable activity stream for every agent session and an editable memory system. Users can watch what agents are doing in real time, inspect their working assumptions, correct errors in the agent's memory, and restart execution from a specific checkpoint. You do not wait for the final output to discover a problem. You intervene where the reasoning goes wrong.
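A minimal sketch of the first guardrail, assuming the codebase is a local checkout: before an agent task starts, verify that the repository is actually readable, and stop with an explicit request for guidance if it is not. The names `CodebaseUnavailable` and `require_codebase` are hypothetical, and a real check would likely also cover credentials and remote access.

```python
from pathlib import Path


class CodebaseUnavailable(Exception):
    """Raised so the run halts and asks a human, instead of guessing."""


def require_codebase(root: Path, min_files: int = 1) -> list[Path]:
    """Return the files under root, or stop the run if they cannot be read."""
    if not root.is_dir():
        raise CodebaseUnavailable(
            f"{root} is not a readable directory; ask for guidance before continuing"
        )
    files = [p for p in root.rglob("*") if p.is_file()]
    if len(files) < min_files:
        raise CodebaseUnavailable(
            f"{root} contains {len(files)} file(s); expected at least {min_files}"
        )
    return files
```

The design choice is that the failure is loud and blocking. An agent that receives an exception cannot quietly fall back to inferring the project's purpose from the repository name.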

The Cultural Shift

Even within our team, as pioneers of this approach, the transition was not smooth. We went through a significant migration: from IDE-centric development to terminal-based agent workflows using Claude Code and similar tools, with IDEs as backup for advanced analysis and spot-checking. The developers who initially said "I can write this faster myself" were right, in specific cases. Complex debugging still benefits from human intuition. But on new feature development, the productivity difference became undeniable, and the team adapted.

The Results

Using these practices, our development team now sustains a throughput of up to 10 PRs per developer per week. On new feature development, the productivity improvement is an order of magnitude compared to our pre-agent baseline. On complex debugging, the advantage is more modest (20-30%), though the log analysis and problem identification phase improved by 5-7x thanks to agents that analyze cloud application logs in real time and cross-reference them with the codebase.

Two outcomes matter more than speed: we eliminated merge conflicts on feature branches entirely, and significantly reduced production incidents. Both are direct consequences of integrating agents into our CI/CD pipeline and having the team use them systematically for every verification step.

These numbers are specific to our context, our codebase, and our team dynamics. The multipliers will vary. What generalizes is the structural approach: invest in the environment, and agent productivity follows.

For reference, the industry data tells a consistent story. Stripe merges over 1,000 agent-generated PRs per week. Leading AI labs have documented sustained throughput of multiple PRs per engineer per day. The contexts are very different, but the structural patterns are the same.

Questions to Ask Your Team Right Now

You do not need a consultant to start diagnosing where you stand. These five questions will surface the structural gaps. We know, because these are the questions we asked ourselves.

Can your AI agents find your architectural decision records? Not whether you have ADRs. Whether they are in a location and format an agent can use in-session. If the answer is "they're in Confluence somewhere," your agents are operating without that context. When we built our Project Context, the single biggest improvement came from making existing documentation agent-accessible, not from writing new documentation.

How fast does your CI give feedback to an agent? If the answer is "ten to fifteen minutes," your agents are operating in the dark. Feedback that arrives after the session has ended reaches an agent that no longer holds the context to act on it. Target under five minutes for agent-relevant checks, and under thirty seconds for linting. Our shift-left controls caught issues that would previously have surfaced only in production.
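One way to keep those targets honest is to measure each check against its budget and report overruns alongside the result. The sketch below assumes checks are plain Python callables; `BUDGET_SECONDS`, `run_with_budget`, and the check names are hypothetical, and the two budgets simply restate the targets above.

```python
import time
from typing import Callable

# Budgets in seconds, taken from the targets in the text.
BUDGET_SECONDS = {
    "lint": 30,
    "agent_checks": 300,
}


def run_with_budget(name: str, check: Callable[[], bool]) -> dict:
    """Run one check and record whether it finished within its time budget."""
    start = time.monotonic()
    passed = check()
    elapsed = time.monotonic() - start
    return {
        "name": name,
        "passed": passed,
        "seconds": elapsed,
        "within_budget": elapsed <= BUDGET_SECONDS[name],
    }


# A trivial stand-in check; a real one would invoke the linter or test suite.
result = run_with_budget("lint", lambda: True)
print(result["name"], result["passed"], result["within_budget"])
```

Reporting the budget as a separate field, rather than failing the check, lets a slow-but-passing check show up as a pipeline problem instead of a code problem.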

Do you have documented boundaries agents must respect? Service ownership rules, deprecated libraries, security boundaries, compliance constraints. Are these encoded as linter rules and automated checks, or are they in a wiki that agents cannot read? We encoded ours as agent skills and hooks that run before code is committed. The constraints are structural, not advisory.
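To show what "structural, not advisory" can look like, here is a minimal sketch of a pre-commit check built on Python's standard `ast` module. It assumes a Python monorepo where each domain is a top-level package, so a cross-domain call shows up as an import; that is an assumption made for the example, not a description of our stack. `FORBIDDEN_IMPORTS`, `DEPRECATED_MODULES`, `legacy_http_client`, and `check_source` are hypothetical names.

```python
import ast

# Hypothetical policy: which top-level packages a domain may not import directly.
FORBIDDEN_IMPORTS = {
    "payments": {"identity"},
}
# Hypothetical name standing in for a deprecated internal library.
DEPRECATED_MODULES = {"legacy_http_client"}


def check_source(owner_domain: str, source: str) -> list[str]:
    """Return one message per import that breaks the policy above."""
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module:
            names = [node.module]
        else:
            continue
        for name in names:
            top = name.split(".")[0]
            if top in FORBIDDEN_IMPORTS.get(owner_domain, set()):
                violations.append(
                    f"line {node.lineno}: {owner_domain} must not import {top} directly"
                )
            if top in DEPRECATED_MODULES:
                violations.append(f"line {node.lineno}: {top} is deprecated")
    return violations


sample = "import identity.users\nfrom legacy_http_client import get\nimport json\n"
for message in check_source("payments", sample):
    print(message)
```

Run as a hook, a non-empty result blocks the commit, and the message tells the agent which rule it broke so it can correct the change in the same session.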

Who reviews AI-generated code and what do they check? If senior engineers are reviewing AI-generated code the same way they review human-written code, you have inverted the value proposition. We solved this by layering agent-assisted review with targeted automated tests, then reserving human review for architectural decisions and edge cases. The review bottleneck disappeared.

Is agent output traceable to intent? If a PR is merged and something goes wrong, can you trace back to what the agent was supposed to do, what it actually did, and where it deviated? Without a mechanism like our development journals and gap analysis, agent-driven development is a black box. Auditability is not a nice-to-have; it is what makes the process trustworthy.

Making This Systematic

The five questions above will show you where the gaps are. Closing them is a different kind of work than most engineering organizations have done before. It is environment design: documentation architecture, constraint systems, feedback loop engineering, role definition, and organizational pattern-setting. It sits at the intersection of platform engineering, developer experience, and organizational design.

FairMind built a consulting framework for this work, starting from our own internal practice and now enriched through client engagements. Our consultants assess engineering organizations across 72 criteria and 7 dimensions, covering the full environment, including context systems, architectural constraints, CI/CD feedback loops, agent workflow patterns, measurement, and team structure. The assessment takes two to three weeks and produces a prioritized roadmap based on where your organization actually stands.

You do not need to work with FairMind to make progress. The questions above are a real starting point. But if you want to understand your organization's position precisely, and get a structured path to close the gaps, that is what the assessment is designed to do.

The Bottom Line

The senior engineers who say AI slows them down are not wrong. In an unstructured environment, agents generate plausible code that violates invisible rules, and fixing that code falls on the most experienced people in the room. The problem is not the AI. The problem is that the rules are invisible.

We did not get to our results by deploying better models. We did it by building an environment where the rules are encoded, the constraints are structural, the feedback loops close in seconds, and every agent session produces a traceable record of intent versus execution. The agents are capable. The environment is the lever.

Harness engineering is the discipline of building that environment deliberately. It is the work that converts AI tool adoption into AI-driven productivity.

If you want to understand where your organization stands, reach out: info@fairmind.ai

For a deep dive into the discipline and the seven-dimension assessment framework, see also: Harness Engineering: The Discipline That Determines Whether AI Agents Ship or Stall

Discover how FairMind can help your organization: Harness Engineering Assessment
